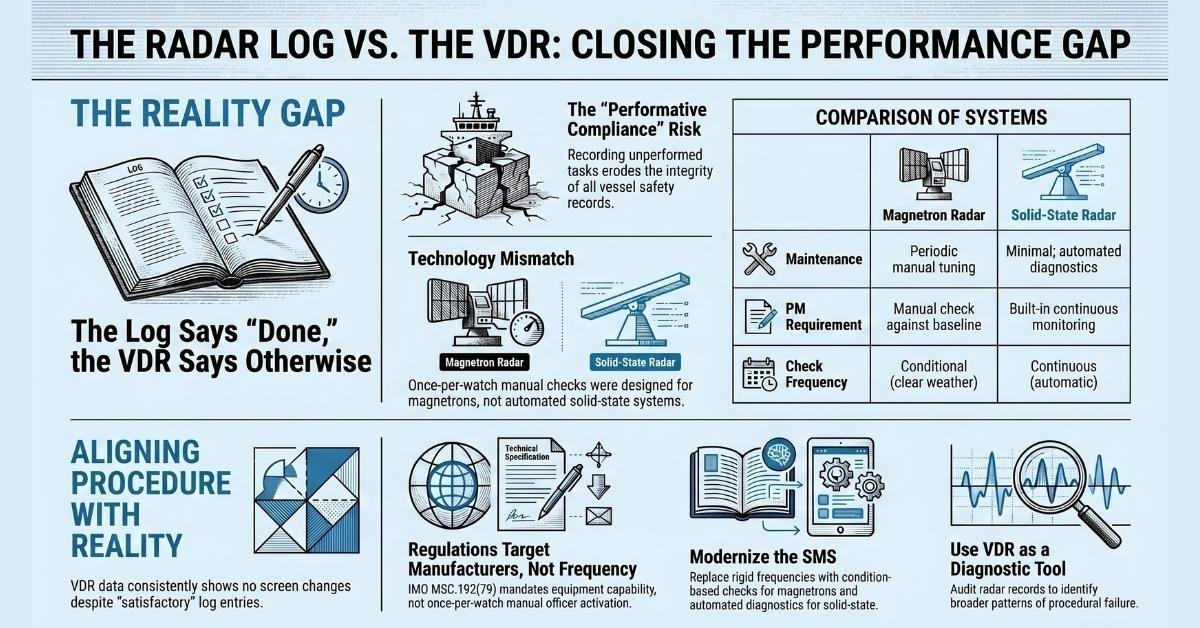

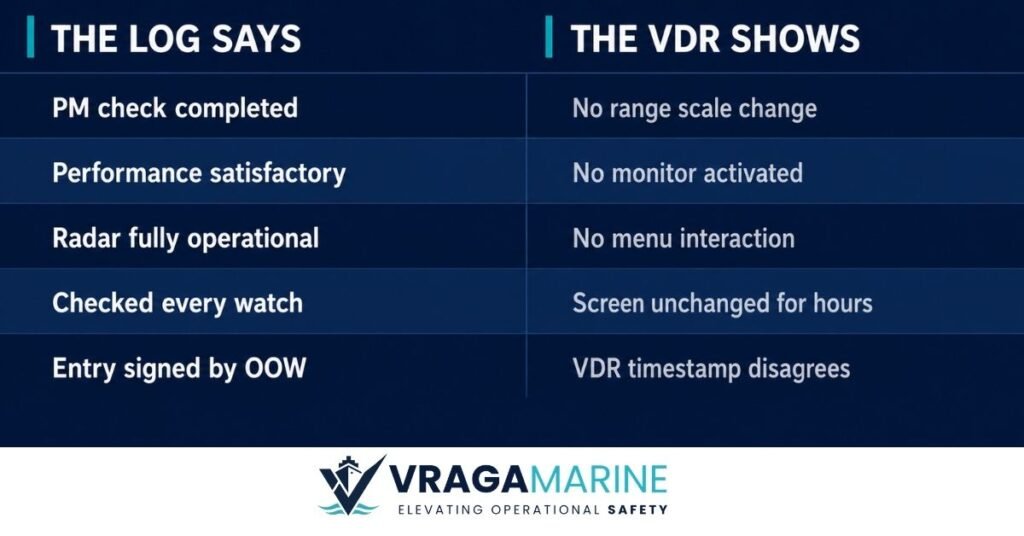

The radar performance monitor (PM) check is one of the most consistently recorded entries in a vessel’s radar log. Based on VDR data from hundreds of audits, it is also one of the most consistently unperformed.

The screen does not change. There is no range scale shift. No activation sequence. The display carries on — targets plotted, clutter suppressed, the watch proceeding exactly as before. And yet the column reads: PM check — satisfactory.

I am not making a point about individual officers. The procedure as written is the problem. It has been inserted into SMS manuals and standing orders across the industry without anyone seriously asking two questions: what does it require of a navigator, and is the frequency imposed on it grounded in anything?

The VDR does not care what the log says. It recorded what the screen showed.

What regulation requires of radar performance monitor checks

SOLAS Chapter V, Regulation 19 mandates the carriage, installation, and maintenance of radar equipment. Paragraph 4 states that navigational systems “shall be so installed, tested and maintained as to minimize malfunction.” That is the entirety of the operational maintenance obligation in the convention. No frequency. No requirement to activate any specific function during a watch.

IMO Resolution MSC.192(79) — the document most SMS manuals cite — is titled Adoption of the Revised Performance Standards for Radar Equipment. The word “for” is doing significant work there. These are standards governing what a radar must be capable of doing when manufactured. They are directed at equipment designers and Flag State type-approval authorities, not at watchkeeping officers.

Section 5.7 of MSC.192(79) covers Radar Performance Optimization and Tuning. Its three clauses read as follows:

5.7.1: “Means should be available to ensure that the radar system is operating at the best performance.”

5.7.2: “An indication should be provided, in the absence of targets, to ensure that the system is operating at the optimum performance.”

5.7.3: “Means should be available (automatically or by manual operation) and while the equipment is operational, to determine a significant drop in system performance relative to a calibrated standard established at the time of installation.”

Every clause is in the passive voice and addressed to the manufacturer. “An indication should be provided” means the manufacturer must fit a performance monitoring capability. “Means should be available” means that capability must be built into the equipment. None of these clauses has an addressee who could be read as the OOW, and none specifies how often an operator should use the function.

The clause most often cited to justify a once-per-watch check is 5.7.2. Read in its document context — a manufacturer equipment standard — it says a PM must be fitted. It says nothing about how often an officer must activate it.

Clause 5.7.3 makes the position of solid-state radars even clearer: the means to detect a performance drop can be provided “automatically or by manual operation.” A solid-state radar with continuous built-in diagnostics satisfies this clause entirely, with no manual intervention by the officer. For a ship fitted with that equipment, an SMS procedure requiring a manual PM test every watch demands an action that the regulation does not require and the technology does not need.

Yet across the industry, the shipboard PM requirement has been interpreted as a watch-by-watch obligation — a reading that does not survive contact with the source document.

What the ICS Bridge Procedures Guide actually says

The ICS Bridge Procedures Guide, Sixth Edition, is referenced in IMO convention footnotes and is recommended carriage on all vessels. When it speaks on bridge procedures, it speaks with weight.

Section 5.11.2 — Safe use of radar — says this: “The quality of the radar picture should be checked regularly. This may be automatic when using a performance monitor.”

Two things are embedded in that sentence. First, “regularly” does not mean once per watch. Second, the word “may” explicitly acknowledges that automatic performance monitoring — the kind built into solid-state radars — is a fully valid approach. The BPG does not frame the PM check as something an officer must manually activate.

The same section then states: “Clear weather gives an opportunity for watchkeepers to check radar target detection performance.”

An opportunity. Conditional on clear weather. The BPG is describing a check that should be done when conditions are suitable — open water, good visibility, a settled watch. Not one that must be logged at the start of every watch regardless of where the ship is.

Section 5.11.1 lists what the OOW should understand about performance monitoring, and directs them to “manufacturers’ guidelines” for the expected results — consistent with the MSC.192(79) approach of grounding the check in the equipment’s own calibration standard rather than a fixed frequency.

Section 4.22.2, on routine tests and checks, includes: “Built-in test facilities should be checked frequently, including alarm self-test functions.” Not once per watch. Frequently. And it covers all built-in test facilities — which for modern solid-state radars means the automated diagnostics that run continuously.

Nowhere in the ICS BPG 6th Edition does a once-per-watch performance monitor check appear as a required procedure. The guide is not silent on the subject — it addresses it directly and frames it as contextual, condition-dependent, and compatible with automatic monitoring.

The solid-state radar problem

A growing proportion of the merchant fleet is now fitted with solid-state radar systems — Furuno’s NXT series, the Kelvin Hughes SharpEye, and others. These use solid-state electronics rather than a magnetron to generate the radar pulse. Kelvin Hughes states explicitly: “No magnetron — minimal routine maintenance requirements” and “Built In Test Equipment (BITE) for automatic diagnostics.”

These radars satisfy MSC.192(79) 5.7.3 automatically. The built-in diagnostics constitute the “means available” for detecting performance drops. There is no manual PM procedure that maps onto this technology in the same way it maps onto a magnetron radar.

Yet on vessels fitted with these systems, the SMS procedure still reads: performance monitor to be checked once per watch. Officers make the entry because the column exists. The VDR shows that whatever they did, it was not a performance monitor test in any meaningful sense.

The magnetron, degrading over months against a calibration baseline, is a different piece of equipment from a solid-state transceiver with continuous automated diagnostics. A procedure written for one cannot simply be applied to the other.

The PM requirement as written in most SMS manuals was designed around magnetron technology. Solid-state systems have made that assumption obsolete.

What this culture produces

The performance monitor log entry is, in my experience, the single most reliably false entry in a ship’s radar log.

I say that with no intent to criticise individual officers. When a procedure is written without regard for operational practicality, officers find the path of least resistance. The column gets filled. The VDR records that the screen never changed. Both things coexist quietly until a VDR audit or an incident investigation makes them visible.

The larger danger is what this does to the integrity of bridge records overall.

A log book and a radar watch record are documents that DPAs, incident investigators, Flag State inspectors and courts rely upon. When officers learn — as they do, very quickly — that the expected practice is to record things that were not done, that habit does not stay contained. It bleeds into position-fixing records. It bleeds into hours of rest. It bleeds into permit to work systems. The transition from writing what happened to writing what is expected is not a sudden decision — it is a gradual erosion, and it almost always begins with something small.

In VDR data, the pattern is consistent. A vessel with a habitual PM log entry that the radar screen never supports almost always shows other gaps between what is logged and what was recorded. The PM test is rarely the only thing.

When a procedure cannot be done, the entry still has to be written. That is a system failure, not a personal one.

What the record should actually reflect

Rather than a fixed-frequency obligation that officers cannot meaningfully perform, a PM record that has operational value looks different depending on the equipment fitted.

For magnetron radar systems, a meaningful PM check is conducted at a suitable time during an open-water watch — when the officer can give it proper attention and compare results against the calibration baseline. When conditions do not permit the formal test, the officer records what was actually observed: target detection quality and the maximum range achieved on known contacts. That is a genuine performance assessment.

For solid-state radar systems, the traditional magnetron-based PM process does not apply. Built-in diagnostics satisfy the regulatory requirement automatically. The PMS routine covers the review of those diagnostics. The radar log confirms the system was checked and found operational — without requiring an entry format designed for equipment the vessel does not carry.

The question worth asking of any PM entry in any log is a simple one: if the VDR were reviewed, would the screen activity support what is written here?

The VDR view

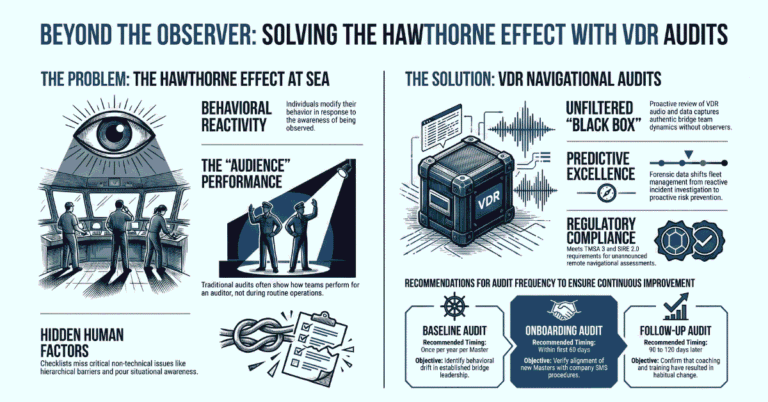

If your fleet carries once-per-watch PM entries in the radar log, a VDR audit of those records will quickly tell you whether the entries have any foundation.

That finding has two uses. The first is corrective — understanding where procedure and practice have diverged, and why. The second is diagnostic — understanding whether the gap is isolated to the PM test, or whether it is part of a broader pattern of performative compliance on that vessel.

In my experience, it is rarely isolated.

The performance monitor log entry is a small thing. But it is a clear thing, and it is visible in the data. It opens a conversation that every DPA and fleet manager should be having: how many procedures in our SMS exist to satisfy an auditor rather than to protect a crew?

That conversation is worth having before the VDR data makes it unavoidable.


Also in the VDR Series

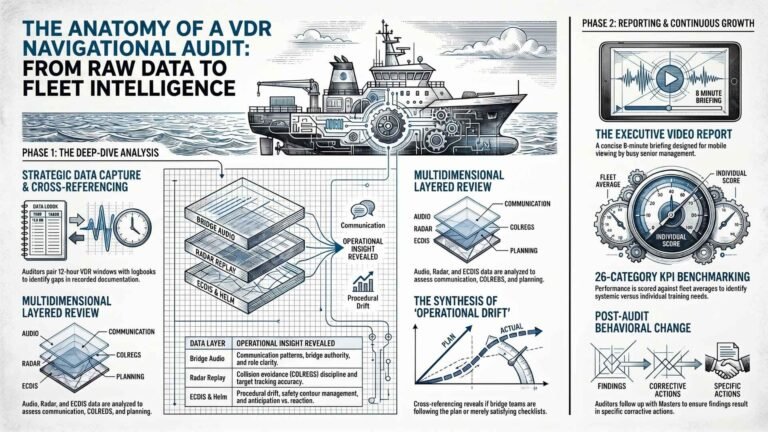

VDR Navigational Audits: What They Are, Why They Matter, and When to Do One

How a VDR Navigational Audit Works — A Step-by-Step Guide for Ship Managers

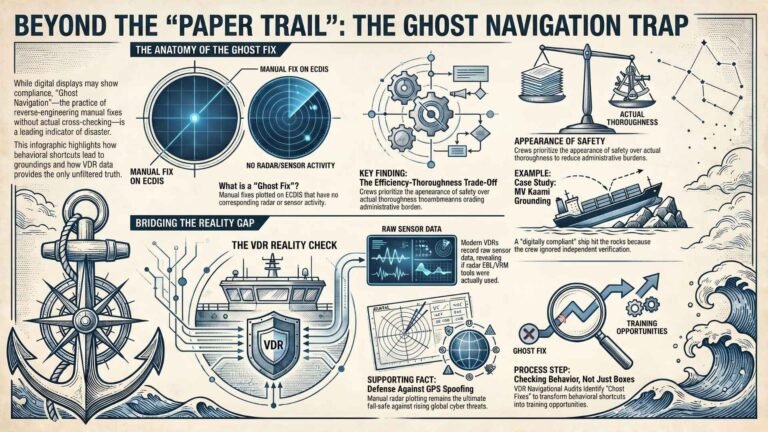

Ghost Fix: What the Bridge Team Does When the Auditor Has Gone Home

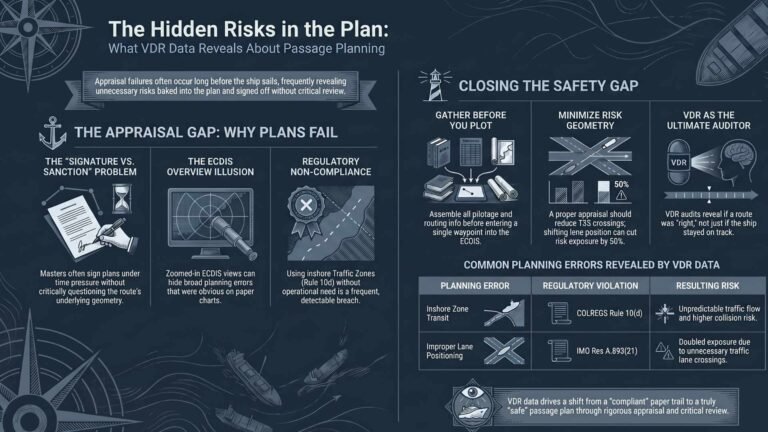

Passage Planning Mistakes Ships Make — And What the VDR Reveals