I am often asked by ship managers and owners what a VDR audit actually involves. Not in abstract terms, but practically. What do I look at first? What does the data tell me that a traditional inspection cannot? And what does a ship manager actually receive at the end?

It is a fair question, and if you are considering commissioning a VDR review for your fleet, you deserve to know exactly what you are paying for.

This article walks through the process as I actually conduct it — from selecting the right passage to the final benchmarked report.

“Checklists confirm what was done. VDR data reveals how it was actually done. The gap between the two is where the risk lives.”

The proactive use of VDR data for navigational assessment is not just good practice — it is specifically recommended by OCIMF as a tool for evaluating human element performance and is embedded in TMSA and SIRE 2.0 requirements.

The Steps of a VDR Navigational Audit

Step 1 — Selecting the Right Voyage

Not all voyages are equal.

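VDR data is not stored indefinitely. Many systems, particularly older installations, retain only a rolling 12-hour window that constantly overwrites itself, and even newer units with longer retention keep the data for a limited period. This means the data capture has to be deliberate and planned.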

I work with the ship manager to identify an upcoming port call or critical passage in advance. Once the vessel has completed that operation, the Master is instructed to save and download the data before it is overwritten.
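The timing behind that instruction can be sketched in a few lines of Python. This is an illustration only: the function name, the one-hour safety margin, and the example times are assumptions, and the retention period has to be taken from the vessel's own VDR documentation.

```python
from datetime import datetime, timedelta

def latest_save_time(operation_start, retention_hours, safety_margin_hours=1.0):
    """Latest time to trigger a save while the start of the operation is still retained.

    retention_hours is the rolling window of the specific VDR (an input, not a constant).
    safety_margin_hours is a hypothetical buffer that gives the crew time to act.
    """
    return operation_start + timedelta(hours=retention_hours - safety_margin_hours)

# Hypothetical example: the pilot boards at 06:00 and the VDR retains 12 hours.
pilot_on_board = datetime(2024, 3, 1, 6, 0)
print(latest_save_time(pilot_on_board, retention_hours=12))  # 2024-03-01 17:00:00
```

If the operation itself runs close to the length of the retention window, the save has to be triggered almost as soon as it ends, which is why the instruction to the Master is agreed before arrival rather than afterwards.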

I suggest managers look for:

— Port approaches and departures — where workload is highest and procedural discipline is most tested

— Pilotage passages — where Master-Pilot coordination and bridge authority can be assessed

— Traffic separation schemes and congested waters — where COLREGS compliance and situational awareness are visible in the radar data

A 12-hour review window across two or three distinct operational scenarios tells me considerably more than 48 hours of open-ocean passage-keeping.

Step 2 — Documents We Request Alongside the Data

VDR data alone is only part of the picture.

The audio tells me what the bridge team said. But cross-referencing that audio against the bell book, the passage plan against the ECDIS track, rest hour records against watch schedules — that is where the real gaps become visible. A crew can sound professional on the bridge and still have a passage plan with incorrect air draft calculations. A Master can conduct a thorough pilot exchange and still have a bell book entry that records his arrival on the bridge 5 minutes before he actually arrived.

Alongside the VDR data and playback software, I request supporting documents for the period under review. These typically include the passage plan, bell book, deck logbook, pilot card, Master-Pilot Exchange checklist, pre-arrival and departure checklists, rest hour records, Master's Standing Orders and Night Orders, ECDIS familiarisation records, and the VDR Annual Performance Test report.

This list is not fixed. It depends on the company SMS, the type of vessel, and the operational activity being reviewed. A pilotage audit in a tidal port requires different supporting documents than a TSS transit audit on an open-coast passage. I advise the ship manager on exactly what is needed once the voyage segment has been selected.

What matters is the cross-referencing. Discrepancies between what the logbook records and what the VDR audio captures are among the most consistent findings across fleets — and they are invisible to any inspector who only reads the paperwork.
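In principle, the comparison is a simple one: take the time an event was logged, take the time the same event is heard on the VDR, and flag any difference larger than an agreed tolerance. A minimal sketch, assuming the event times have already been extracted by hand from the bell book and the audio (the event names, the two-minute tolerance, and the function name are all hypothetical):

```python
from datetime import datetime, timedelta

def find_discrepancies(logged, observed, tolerance=timedelta(minutes=2)):
    """Return (event, logged_minus_observed) for events that differ by more than tolerance."""
    findings = []
    for event, logged_time in logged.items():
        observed_time = observed.get(event)
        if observed_time is None:
            continue  # event not identifiable in the VDR data; needs manual review
        difference = logged_time - observed_time
        if abs(difference) > tolerance:
            findings.append((event, difference))
    return findings

# Hypothetical entries: times as written in the bell book vs. as heard on the VDR audio.
bell_book = {
    "Master on bridge": datetime(2024, 3, 1, 5, 55),
    "Pilot on board": datetime(2024, 3, 1, 6, 10),
}
vdr_audio = {
    "Master on bridge": datetime(2024, 3, 1, 6, 0),
    "Pilot on board": datetime(2024, 3, 1, 6, 11),
}

for event, difference in find_discrepancies(bell_book, vdr_audio):
    minutes = difference.total_seconds() / 60
    print(f"{event}: logged {minutes:+.0f} min relative to VDR")
# Master on bridge: logged -5 min relative to VDR
```

A negative difference means the logbook records the event earlier than the VDR shows it happening. The tolerance is a judgement call and should allow for clock differences between the VDR and the bridge clock.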

Step 3 — The Audio Review

This is where most people are surprised.

The VDR captures continuous bridge audio. What I am listening for is not dramatic; it is pattern. How does the OOW communicate? Is there a clear challenge-and-response between the watch officer and the helmsman? How was the Master-Pilot Exchange (MPX) conducted, or did the Pilot simply take the conn without any meaningful exchange of information?

A few things I have observed repeatedly across audits:

Silence during critical manoeuvres. A vessel entering a confined channel with no verbal exchange between bridge team members is not a sign of a well-drilled, wordless crew. It is almost always a sign of confusion about roles — nobody is quite sure who is responsible for what.

Incomplete read-backs. The helmsman acknowledges a course change with “yes” instead of repeating the ordered heading. It sounds minor. Multiply it across a 4-hour pilotage in restricted visibility and you have a systemic communication failure waiting to become an incident.

Passive Master presence. The Master is on the bridge but not engaged. Long silences from the senior officer during a passage that objectively requires active oversight.

None of this appears in a checklist. It is only audible if you listen to what actually happened.

Step 4 — Radar Replay Analysis

The radar data provides a continuous, timestamped record of every target the bridge team encountered and how they responded to it.

I replay the radar picture for the selected periods and assess against COLREGS. What I am looking for is the decision point — at what range did the OOW take action? Was the action taken early and deliberate, or late and reactive? Was the vessel the give-way or stand-on, and did the bridge team understand which?
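To judge whether an action was early or late, it helps to know how the encounter stood at the moment the OOW acted, usually expressed as the closest point of approach (CPA) and the time to it (TCPA). The standard relative-motion calculation is sketched below. The function name, the flat-plane approximation, and the example figures are assumptions for illustration, not values taken from any audit.

```python
import math

def cpa_tcpa(rel_x, rel_y, rel_vx, rel_vy):
    """Closest point of approach from relative motion.

    rel_x, rel_y   : target position relative to own ship (nm east, nm north)
    rel_vx, rel_vy : target velocity relative to own ship (knots east, knots north)
    Returns (cpa_nm, tcpa_hours). A negative TCPA means the CPA has already passed.
    """
    speed_sq = rel_vx ** 2 + rel_vy ** 2
    if speed_sq == 0:
        # No relative motion: the range never changes.
        return math.hypot(rel_x, rel_y), 0.0
    tcpa = -(rel_x * rel_vx + rel_y * rel_vy) / speed_sq
    cpa = math.hypot(rel_x + rel_vx * tcpa, rel_y + rel_vy * tcpa)
    return cpa, tcpa

# Hypothetical target: 1 nm east and 6 nm north of own ship, closing from ahead at 12 knots.
cpa, tcpa = cpa_tcpa(1.0, 6.0, 0.0, -12.0)
print(f"CPA {cpa:.1f} nm in {tcpa * 60:.0f} min")  # CPA 1.0 nm in 30 min
```

Running this at the timestamp of the first helm or engine order gives a rough measure of how much sea room and time remained when the decision was made.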

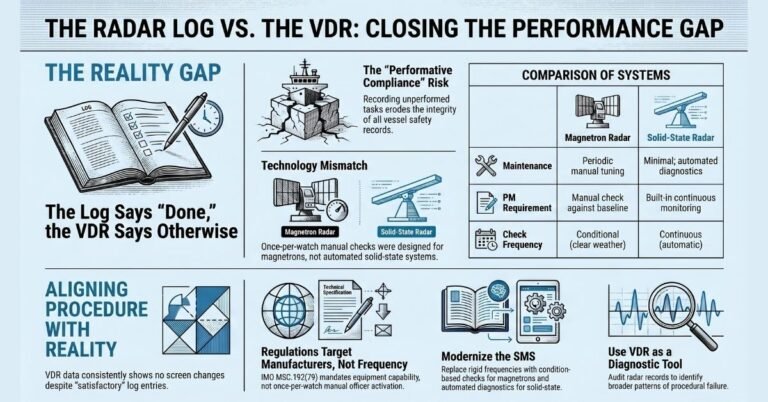

In congested waters, I look at how many targets were being tracked simultaneously and whether the team was applying systematic ARPA discipline or relying on visual assessment alone. I also check whether the radar was correctly set to sea-stabilised mode for collision avoidance. Use of ground-stabilised mode is a recurring finding across fleets, and it directly affects the accuracy of collision avoidance data, because target vectors then show movement over the ground rather than through the water.

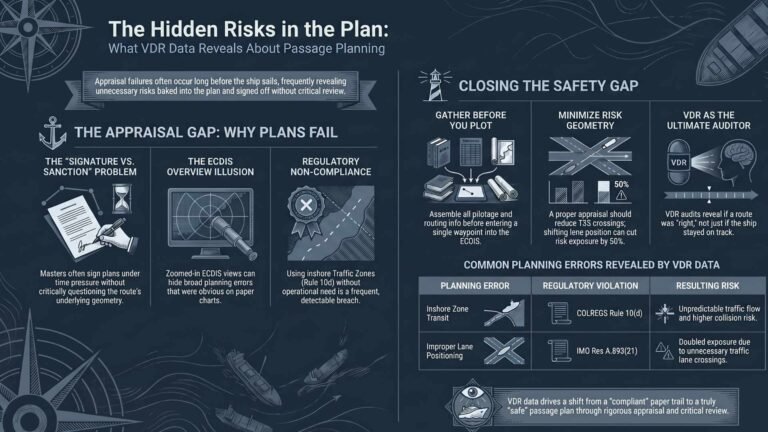

Step 5 — ECDIS Review

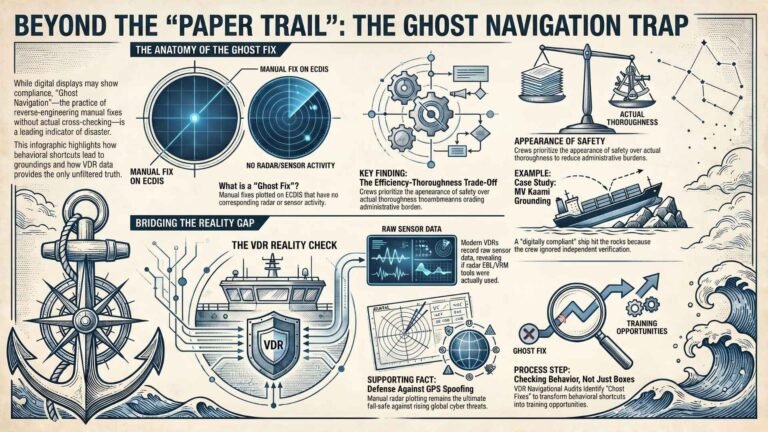

The ECDIS data tells me how the passage was planned, and whether the plan was actually followed.

I check the route as filed against the route as sailed. I look at how the ECDIS was configured — safety contour settings relative to actual tidal conditions, chart scale during critical passages, whether radar overlay was in use, and how alarms were being managed.
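The route-as-filed versus route-as-sailed comparison comes down to cross-track distance: how far off the planned leg the vessel was at each recorded position. A minimal sketch follows, assuming short legs where a flat-plane approximation is acceptable. The function names and coordinates are hypothetical.

```python
import math

def to_local_nm(lat, lon, ref_lat, ref_lon):
    """Project a position to (east, north) in nautical miles from a reference point.

    Equirectangular approximation: one minute of latitude is taken as one nautical mile.
    """
    x = (lon - ref_lon) * 60.0 * math.cos(math.radians(ref_lat))
    y = (lat - ref_lat) * 60.0
    return x, y

def cross_track_nm(position, leg_start, leg_end):
    """Signed distance of position from the planned leg, in nm (positive = starboard of track)."""
    ref_lat, ref_lon = leg_start
    px, py = to_local_nm(position[0], position[1], ref_lat, ref_lon)
    ex, ey = to_local_nm(leg_end[0], leg_end[1], ref_lat, ref_lon)
    leg_length = math.hypot(ex, ey)
    if leg_length == 0:
        raise ValueError("leg_start and leg_end must differ")
    # 2D cross product of the position vector with the leg direction.
    return (px * ey - py * ex) / leg_length

# Hypothetical leg running due north, with a recorded position slightly to the east of it.
xte = cross_track_nm((1.05, 103.005), (1.0, 103.0), (1.1, 103.0))
print(f"Cross-track distance: {xte:.2f} nm")  # Cross-track distance: 0.30 nm
```

Applied to every recorded position on a leg, this shows where the vessel left the planned track, by how much, and for how long, which can then be compared with the cross-track limits set in the passage plan.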

I also cross-reference the ECDIS against the passage plan documents. Was parallel indexing marked in the plan but never set up on either radar? Were both ECDIS units updated for the current passage, or was one still showing the previous voyage’s settings? Did the safety contour account for the tidal range at a MASD port, or did it trigger continuous alarms that the bridge team simply ignored?

These are not dramatic failures. They are the kind of quiet procedural drift that accumulates over time and is never visible in a port-based inspection.

Step 6 — Helm Input and Speed Data

This data layer is underused and undervalued.

The pattern of helm inputs tells me about the quality of hand-steering during critical manoeuvres. Frequent, small corrections indicate an officer who is ahead of the ship. Large, late corrections indicate someone who is reacting rather than anticipating.
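One simple way to put numbers on that pattern is to count how many rudder movements were small and how many were large. The sketch below is illustrative only: it assumes rudder angle has been exported as a plain series of readings in degrees, and the 10-degree threshold for a "large" correction is a hypothetical value, not an industry standard.

```python
def summarise_helm(rudder_angles, large_threshold_deg=10.0):
    """Summarise rudder movements from a series of rudder angles in degrees.

    A correction is any change between consecutive readings. The threshold
    separating small from large corrections is an assumption to be tuned per vessel.
    """
    changes = [abs(b - a) for a, b in zip(rudder_angles, rudder_angles[1:]) if b != a]
    large = sum(1 for change in changes if change >= large_threshold_deg)
    share = large / len(changes) if changes else 0.0
    return {"corrections": len(changes), "large": large, "large_share": share}

# Hypothetical series: small early corrections followed by three large swings.
summary = summarise_helm([0, 2, 0, -3, 0, 15, -10, 0])
print(summary["corrections"], summary["large"])  # 7 3
```

The counts only say where to look. Whether a large correction was late or justified still has to be judged against the audio, the radar picture, and the conditions at the time.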

Speed data through congested passages tells me whether the Master is applying prudent seamanship or simply maintaining schedule.

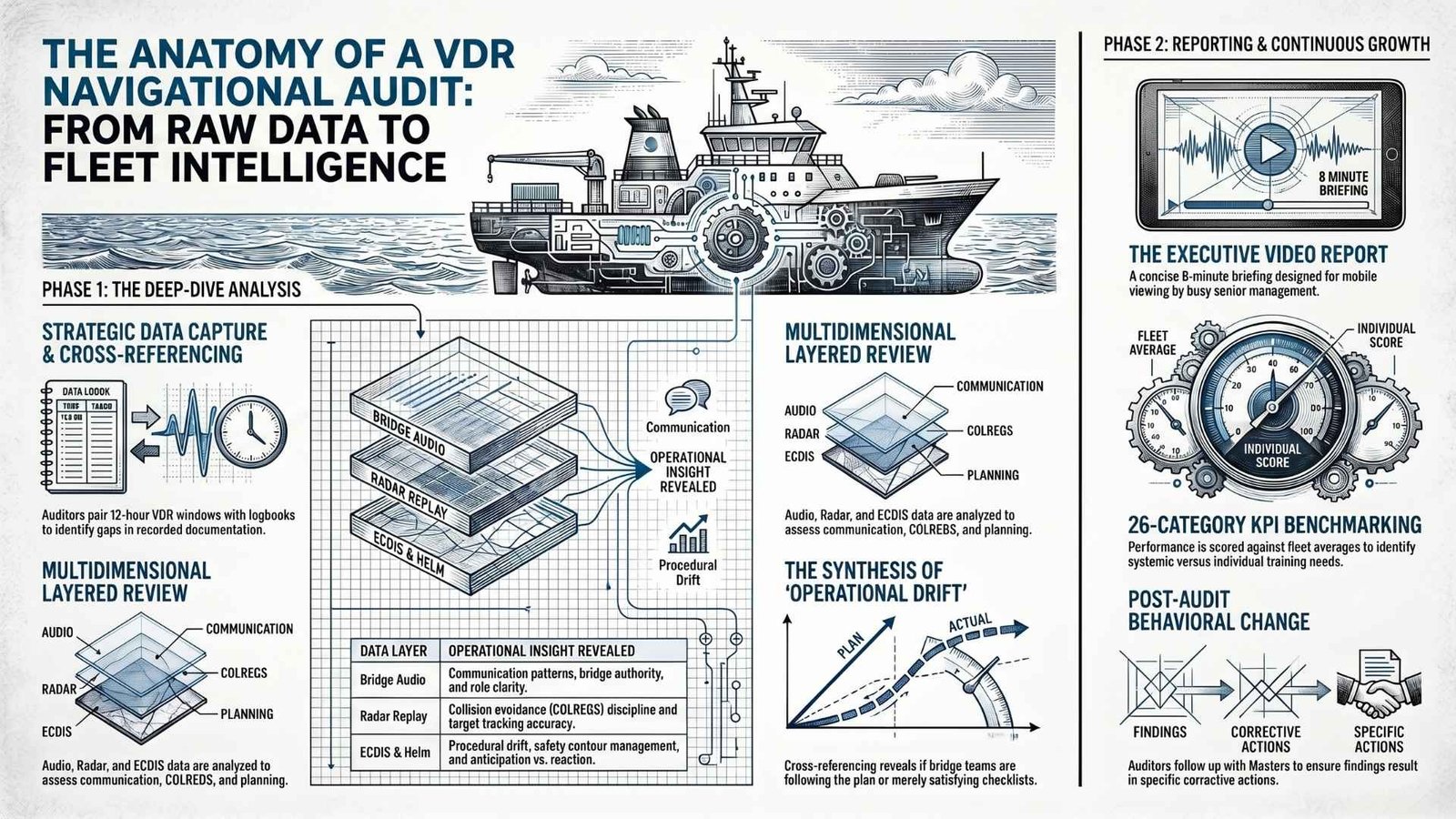

Step 7 — Cross-Referencing the Data Layers

The analysis becomes most powerful when everything is read together.

Audio tells me what the team said. Radar tells me what they encountered. ECDIS tells me how they planned. Helm data tells me how they responded. Documents tell me what was recorded versus what actually happened.

A bridge team that communicates well but has erratic helm inputs may have a training gap at the helmsman level rather than the officer level. A vessel where the ECDIS plan is professionally constructed but the radar replay shows no systematic target tracking suggests that passage planning has been decoupled from watchkeeping — the plan exists to satisfy the checklist, not to guide the passage.

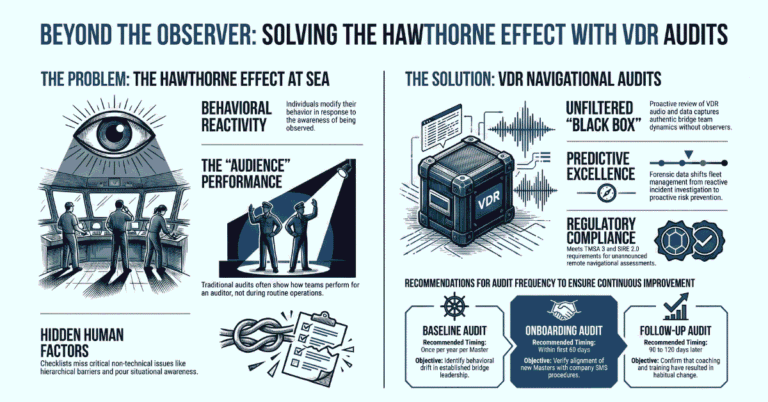

This is the level of nuance that no pre-announced onboard inspection can reach. When an auditor boards, behaviour changes. The Hawthorne Effect is real — crews perform differently when observed. The VDR captures what happened when nobody was watching.

“The VDR captures what happened when nobody was watching. That is the only data that tells you the truth.”

Step 8 — The Report

The report is not a deficiency list. A deficiency list is what you get from a PSC officer — a snapshot of what was visible on a given day. That is not what a VDR audit is for.

What I produce is a structured analytical report covering:

Executive Summary — a clear overview of the passage, the overall assessment, and the key findings, written for the DPA or senior management who need the headline picture without working through the full detail.

Timeline — a timestamped log of the passage as it actually occurred, cross-referenced against the bell book and deck log.

Bridge Team Experience Matrix — ranks, nationalities, sea time in rank, and time with the company for each officer on watch. Experience context matters when interpreting observations.

Human Factor Indicators — each officer assessed across four dimensions: navigational skills, technical ability, teamwork, and verbal communication. Rated on a defined scale from Exceeded through to Not Expected.

KPI Scoring across 26 categories — Command and Control, BRM, Passage Planning, Watchkeeping, COLREGS, ECDIS use, Lookout, Helm, Master-Pilot Exchange (MPX), and more. Each category scored out of 5, producing an overall percentage score with a defined rating band — Exceptional, Excellent, Above Average, Average, or Below Average.

Areas of Concern — each observation written with the specific timestamps so the Master can locate and review the exact moments, the root cause (Oversight, Lack of Knowledge, or Procedural), SIRE reference, and category.

Good Practices identified — because a constructive audit acknowledges what the team is doing well, not only what needs improvement.

Status against common fleet observations — each vessel’s findings checked against our database of recurring observations across all audited vessels.

Training Needs identified — specific, actionable recommendations at the shipboard level, not generic regulatory boilerplate.

Near Misses identified — where the data reveals situations that did not escalate but carried genuine risk.

Every report I write is one I would be comfortable sharing directly with the Master. If I am not prepared to defend an observation to the person it concerns, it does not belong in the report.
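The arithmetic behind the KPI score is straightforward: the category scores are summed, divided by the maximum possible, and the resulting percentage is mapped to a rating band. A minimal sketch is given below. The band names come from the report structure described above, but the percentage thresholds are hypothetical placeholders, and only three of the 26 categories are shown.

```python
# Hypothetical thresholds: the band names are from the report, the cut-offs are assumed.
HYPOTHETICAL_BANDS = [
    (90.0, "Exceptional"),
    (80.0, "Excellent"),
    (70.0, "Above Average"),
    (60.0, "Average"),
]

def overall_percentage(category_scores, max_per_category=5):
    """Overall score as a percentage of the maximum available across all categories."""
    total = sum(category_scores.values())
    return 100.0 * total / (max_per_category * len(category_scores))

def rating_band(percentage):
    """Map a percentage to a rating band using the assumed thresholds above."""
    for floor, label in HYPOTHETICAL_BANDS:
        if percentage >= floor:
            return label
    return "Below Average"

# Illustrative subset of categories, each scored out of 5.
scores = {"Passage Planning": 3, "COLREGS": 4, "Master-Pilot Exchange": 4}
pct = overall_percentage(scores)
print(f"{pct:.1f}% {rating_band(pct)}")  # 73.3% Above Average
```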

Step 9 — The Executive Video Report

The full written report is comprehensive by design — it needs to be, because the DPA and the Master need the detail. But I am aware that a 35-page analytical report sent to a senior manager at a ship management company is likely to sit in an inbox.

So alongside every written report, I produce a short Executive Video Report.

The format is deliberate. It runs through the key findings in sequence — ship particulars, the passage timeline, VDR equipment status, bridge team experience, the Human Factor assessment, KPI scores, each area of concern with the supporting evidence, training needs identified, and the overall rating. Everything a senior manager needs to understand what happened on that vessel, without opening a single page of the full report.

The reason I built it this way is straightforward. A DPA or fleet director managing multiple vessels across multiple time zones does not always have 45 minutes at a desk to work through a report. But they do have 8 minutes between meetings. They have a commute. They have a phone.

The video report is designed to be watched on a phone, on the move, and to leave the viewer with a clear picture of how that vessel’s bridge team performed — what was good, what needs attention, and what the training priorities are.

It is not a substitute for the written report. It is the executive summary that actually gets watched.

Step 10 — Fleet Benchmarking

This is where the real value accumulates over time — and where a single audit becomes a fleet management tool.

Each vessel’s KPI scores and area-of-concern counts are benchmarked against our full database of audited vessels. This produces two comparisons a DPA actually needs: how this vessel’s bridge team performed against the fleet average across each KPI category, and how individual officers compare against each other.

A vessel scoring 69% overall against a fleet average of 64% tells you something. A vessel where the 2nd Officer has five areas of concern against the Master’s zero tells you where to direct training. A fleet where Passage Planning consistently scores below the benchmark average across multiple vessels tells you the problem is not individual; it is systemic, and the SMS needs review.
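The per-category comparison can be sketched as a difference between one vessel's score and the mean of the same category across the database. The scores, category names, and function name below are hypothetical and only show the shape of the calculation.

```python
from statistics import mean

def benchmark(vessel_scores, database_scores):
    """Difference between a vessel's score and the database mean, per KPI category.

    Positive values are above the average, negative values below it.
    """
    deltas = {}
    for category, score in vessel_scores.items():
        average = mean(other[category] for other in database_scores)
        deltas[category] = score - average
    return deltas

# Hypothetical database of three previously audited vessels.
database = [
    {"Passage Planning": 3.0, "COLREGS": 4.0},
    {"Passage Planning": 4.0, "COLREGS": 4.0},
    {"Passage Planning": 2.0, "COLREGS": 4.0},
]
vessel = {"Passage Planning": 2.0, "COLREGS": 5.0}
print(benchmark(vessel, database))  # {'Passage Planning': -1.0, 'COLREGS': 1.0}
```

Run across every vessel in a fleet, a category that comes out negative on most of them points to a systemic issue rather than to an individual bridge team.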

Without benchmarking, an audit is a one-off snapshot. With it, it becomes a continuous improvement programme.

Step 11 — What Happens After the Report

This is the part most auditors skip.

The report lands on the Manager’s desk. The Manager forwards it to the Master. The Master reads it, acknowledges it, files it. Three months later, nothing has changed.

I follow up. I discuss the findings with the Manager and, where appropriate, directly with the Master. I help frame the corrective actions so they are specific enough to change behaviour, not so vague that they can be ignored.

And when the vessel’s next VDR data comes in, I can benchmark. I can tell the ship manager whether the observations from the previous review have been addressed or whether the same patterns are recurring.

Ready to Understand How Your Fleet Actually Operates?

If you manage vessels and want an objective picture of bridge team performance — not based on what crews do when an auditor is aboard, but on what actually happens at sea — I would welcome a conversation.